About

- Computer Vision Research Engineer at Musashi Auto Parts Canada (Musashi AI).

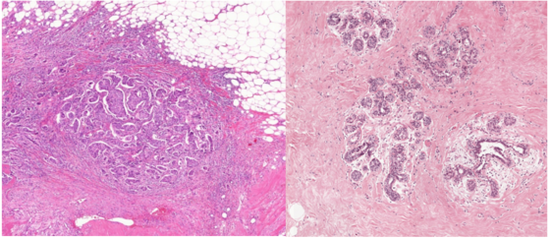

- Former Post-graduate Research Associate at Bell Multimedia Laboratory, where I worked on eXplainable Artificial Intelligence (XAI) in collaboration with LG AI Research.

- Master’s graduate in Computer Vision and Robotics from the Department of Electrical and Computer Engineering (ECE) at the University of Toronto (Class of 2020).

- Detail-oriented Machine Learning (AI) Engineer with 3+ years of full-time work experience in backend software development, database management (SQL), and application support.

- Our paper on the novel XAI algorithm Semantic Input Sampling for Explanation (SISE) was ACCEPTED and PRESENTED at the AAAI-21 conference.

- Two new papers, Ada-SISE and Integrated Grad-CAM, were ACCEPTED and PRESENTED at the IEEE ICASSP-21 conference.

Interests

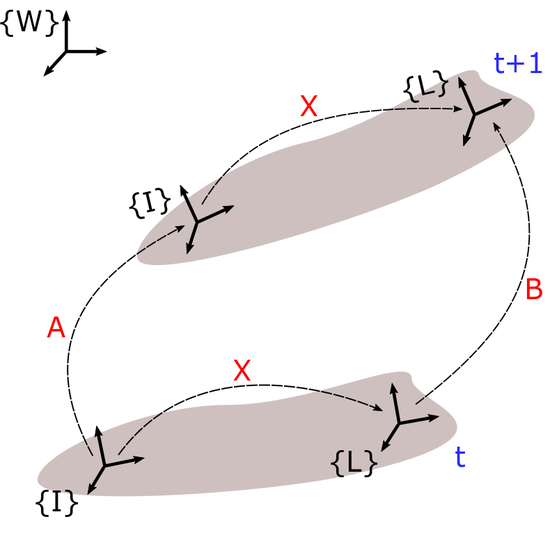

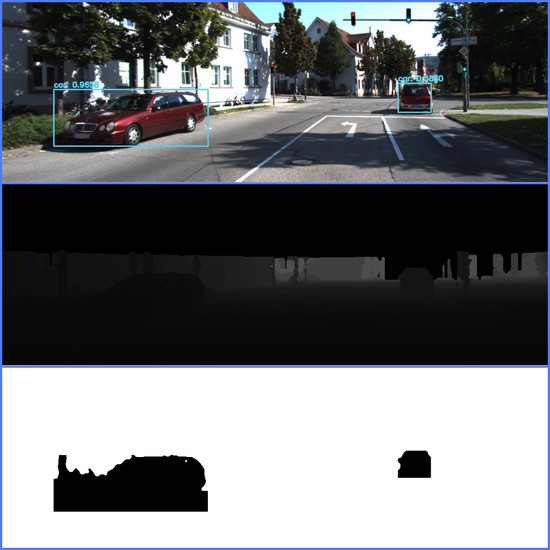

- Computer Vision

- Explainable AI (XAI)

- Robotics and Control

- Machine Learning

Education

M.Eng. in Electrical and Computer Engineering, 2020

University of Toronto

B.E. in Electrical and Electronics Engineering, 2016

Anna University