Object Detection and Instance Segmentation

Instance segmentation on stereo vision images of the KITTI dataset, using the YOLOv3 object detector and depth data.

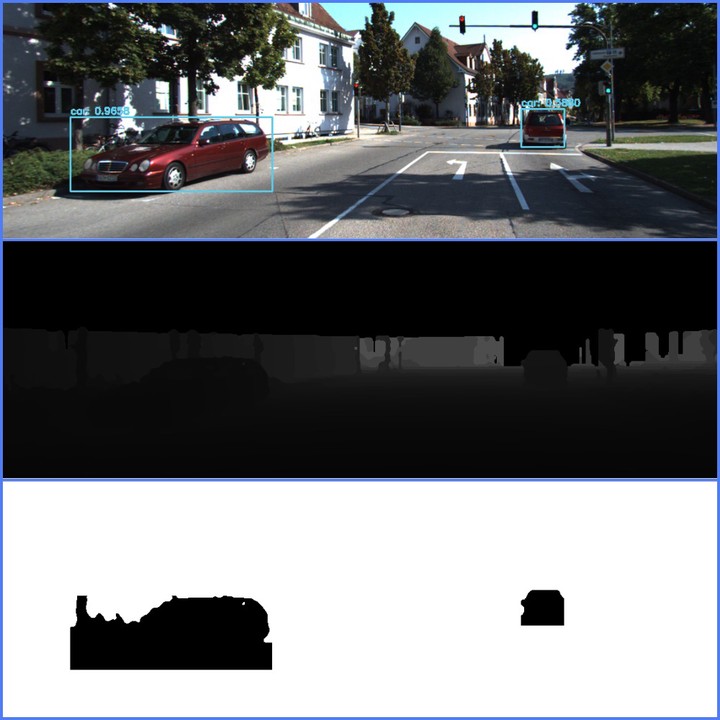

YOLO detections, calculated Depth and Segmentation mask

- This assignment focuses on 2D object detection and instance segmentation using the depth data.

- Motivated by the KITTI vision challenge for object detection and tracking, the disparity maps between the corresponding images from the left (p2) and right (p3) cameras are provided. Depth maps for all images are estimated using the given disparity and calibration information, and the results are attached with the submission as advised.

- An off-the-shelf, pre-configured 2D object detection algorithm, YOLO v3 pre-trained on the COCO dataset, is provided for direct implementation.

- By tuning the confidence and non-maxima suppression threshold values, all cars in the frame of the left image are detected and attached in this report.

- For each detection of objects with label ‘car’, the average depth is calculated, neglecting the values with zero depth.

- Then a heuristic way for instance segmentation is implemented, to calculate the range of depth values to be considered as that of the car, based on the average depth.

- More detailed information regarding the implementation is provided under each corresponding section.
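
The depth estimation, depth averaging, and heuristic segmentation steps listed above can be sketched with NumPy as follows. This is a minimal sketch under stated assumptions, not the code used in this assignment: the function names, the `(x, y, w, h)` box layout, and the fixed `tolerance_m` band around the average depth are all hypothetical, and the focal length and baseline are expected to come from the KITTI calibration files. Depth is computed with the standard stereo relation depth = focal length × baseline / disparity.

```python
import numpy as np


def disparity_to_depth(disparity, focal_px, baseline_m):
    """Convert a disparity map (pixels) to depth (metres): Z = f * B / d.

    Pixels with non-positive disparity are treated as invalid and keep depth 0.
    """
    disparity = np.asarray(disparity, dtype=np.float64)
    depth = np.zeros_like(disparity)
    valid = disparity > 0
    depth[valid] = focal_px * baseline_m / disparity[valid]
    return depth


def mean_nonzero_depth(depth, box):
    """Average depth inside a box, ignoring zero-depth pixels.

    Assumption: `box` is (x, y, w, h) in pixels, already clipped to the image.
    Returns 0.0 when the box contains no valid depth values.
    """
    x, y, w, h = box
    patch = depth[y:y + h, x:x + w]
    valid = patch[patch > 0]
    return float(valid.mean()) if valid.size else 0.0


def depth_band_mask(depth, box, tolerance_m=2.0):
    """Boolean mask of box pixels whose depth is within +/- tolerance_m
    of the box's average depth (a hypothetical heuristic band)."""
    x, y, w, h = box
    mask = np.zeros(depth.shape, dtype=bool)
    avg = mean_nonzero_depth(depth, box)
    if avg == 0.0:
        return mask  # no valid depth in this detection
    patch = depth[y:y + h, x:x + w]
    mask[y:y + h, x:x + w] = (patch > 0) & (np.abs(patch - avg) <= tolerance_m)
    return mask
```

A fixed tolerance is only one possible choice; a band that scales with the average depth would treat near and far cars differently.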

NOTE: Please upgrade to the latest OpenCV version to avoid errors.

Kindly create the following new folders in order to save results.

- data/test/est_depth

- data/test/yolo

- data/test/est_segmentation
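
The folders listed above can also be created from Python rather than by hand. The helper below is a minimal sketch; the name `make_result_dirs` and the `root` parameter are hypothetical, and it assumes the paths are relative to the project root.

```python
import os

# Result folders named in this README, relative to the project root.
RESULT_DIRS = (
    "data/test/est_depth",
    "data/test/yolo",
    "data/test/est_segmentation",
)


def make_result_dirs(root="."):
    """Create each result folder under `root` if it does not exist yet."""
    created = []
    for rel in RESULT_DIRS:
        path = os.path.join(root, *rel.split("/"))
        os.makedirs(path, exist_ok=True)  # no error if already present
        created.append(path)
    return created
```

Calling `make_result_dirs()` from the project root is safe to repeat, since existing folders are left untouched.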

The bounding boxes are saved as numpy arrays and accessed later during the instance segmentation process. So it’s imperative to follow step 1.
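
As an illustration of this hand-off between the detection and segmentation steps, the sketch below saves boxes with `np.save` and reads them back with `np.load`. The function names, the `(x, y, w, h)` integer layout, and any file name passed in are assumptions for illustration; the actual file naming used by the scripts is not shown here.

```python
import numpy as np


def save_boxes(boxes, path):
    """Save detections as an (N, 4) int32 array; `path` should end in .npy.

    Assumption: each box is (x, y, w, h) in pixel coordinates.
    """
    arr = np.asarray(boxes, dtype=np.int32).reshape(-1, 4)
    np.save(path, arr)
    return arr


def load_boxes(path):
    """Load the (N, 4) box array written by save_boxes."""
    return np.load(path)
```

Reshaping to `(-1, 4)` keeps the array two-dimensional even when a frame has no detections, so the segmentation step can loop over it without a special case.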